I am an audio-visual artist specialised in video sampling and visual music, and I have been working professionally since 1995. On this website I have selected 100 projects that tell my story, from 1985 to 2021. Below I summarise this story and link to many of these projects. You can browse all 100 projects at the bottom of this page. My main project is EboSuite, an audio-visual instrument I have been working on with EboStudio since 1995. Read more about the history of that instrument here.

Beginnings

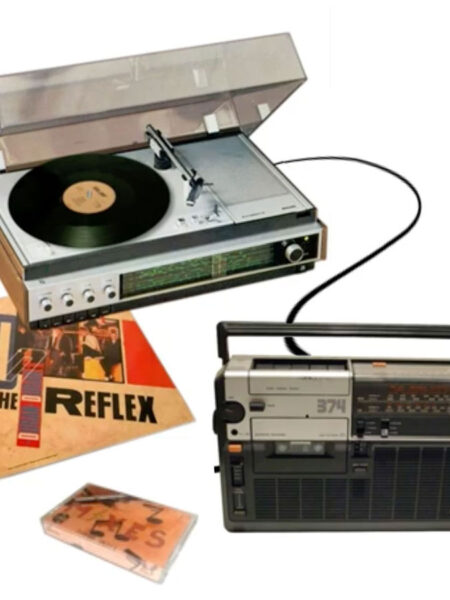

My fascination with audio-visual art started in the mid-80s, when I collected music videos and animations from MTV and other TV shows. I was surprised when I learned that musicians weren’t much involved in the making of their own music videos. Using my parents’ cassette recorder and turntable, I created music remixes and collages (so-called ‘pause tapes’). I was not so much interested in creating nice-sounding music; I was inspired by the freedom of sampling, the freedom to chop and combine any sound in any way, and the new form of music that it produced.

In 1986 I bought a Midi-Set and in 1987 a sampler. In 1988, inspired by the visual style of hip-hop and acid-house culture, and by the music video of ‘Beat Dis’ by Bomb the Bass in particular, I produced a track called Tragic eRRoR in which all the leading samples were video samples. I didn’t have the equipment to make video edits, so I had to imagine the visual side of the composition in my mind. From then on, I looked for ways to produce images and sounds at the same time.

Together with friends I produced a few ‘Amiga AV Demos’ in 1989 and 1990. I made the music and visuals, and my friends made the software to play them as a visual ‘slideshow’ with music. One demo was used to promote the Commodore Amiga in our local computer store, Game World. This was my first public performance.

I learned a lot about making music and visuals, and when I got a second Amiga I was able to make more complex tracks. I joined a hip-hop posse and made music for a fashion show.

Video sampling

In 1991 I entered art school to study Image and Media Technology. The school had a video digitizer, so I was able to make my first video samples. I also learned the basics of programming visual effects. It was a very interesting time.

In 1993 I scored a small club hit with my song ‘Runn into jaZz’, which enabled me to buy more professional equipment. Around this time I started to use the name ‘Eboman’. In 1995 I did my first performances as Eboman and formed the Sample madbanD. I did many crazy, energetic and fun performances with this band.

That same year I combined my audio sampler with an Amiga computer to create an audio-visual sampler. This enabled me to work with audio and video at the same time, in real time! I could use my music software to make videos live.

One of the first compositions I made with this set-up was ‘GarbiTch’. This was an instant hit in my live shows. At the same time I scored an international club hit with ‘Donuts with buddah’. I also created a video sample interview with artist/director Chris Cunningham.

Audio-visual effects

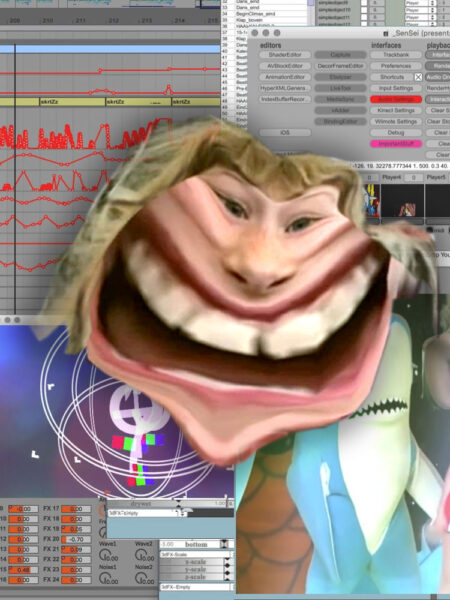

Audio-visual effects play an important role in my work. The success of Donuts with Buddha, my video sample tracks and the live shows gave me the opportunity to take my technical development more seriously and to buy better equipment in 1996. This enabled me to make more complex compositions and to use audio-visual effects. Later, the skrtZz technique enabled me to use audio-visual effects in real time.

In 1997 and 1998 I bought an advanced music production set-up. This set-up allowed me to go much further with audio effects and mixing. With this set-up I produced the Meet_me@samplemadnesS.net album in 1998.

skrtZz + Live video

Fort Ebo GoLd, Hardcore madnesS, DVJ 1.0, DVJ 2.0 and Violent Entertainment made me realise that professional video editing and compositing software was not suited to creating my art. That software is too slow, doesn’t support musical timing and is not suitable for improvisation. I needed software to work with audio and video at the same time, in a musical way, like the Amiga/S760 set-up. So I started looking for alternatives.

For the dDrive must be a MAdMaN movie I experimented with many different techniques to improve my workflow. I used Image/ine and Xpose to produce my compositions live.

In 1998 I developed the skrtZz technique to work with video in a more creative, flexible and musical way and to be able to work with audio-visual effects in real time.

In 2000 I produced the DigitaL skrtZz worLd champion album to improve my skrtZz skills. Around this time I also experimented a lot with the Chaos Funk concept to improve my musical timing.

SenSorSuit + DVJ

In 1999 I developed the SenSorSuit. I used metal door hinges in my armpits and elbows, DJ faders between my fingers and buttons on the ground to track my body motion. With this motion tracking suit I was able to control audio-visual effects and videos live on stage. I used my EboNator software and Image/ine to perform with this suit on several screens simultaneously.

Because my SenSorSuit made my shows more complex, I also started performing as DVJ in 1999. I combined different audio-visual techniques and merged the sound of drum ’n’ bass with other dance music styles to create energetic and hectic DVJ tracks. Tracks that I didn’t use for DVJ shows are collected in the Adventures in basS project.

I didn’t like the static workflow of existing video editing and effects software, so I created large parts of the DVJ tracks live and improvised, with my SenSorSuit.

Audio-visual instruments

In 2001 I started developing my own audio-visual software, because software to work with music and visuals at the same time still didn’t exist. In 2003 I decided to make all my future work exclusively with my own, self-developed software. My compositions became much less complex, because my software was pretty basic at first. But this decision encouraged me to develop better tools, since I really depended on them. I wanted to start from scratch and rebuild my working process with instruments optimised for my art form: video sampling and visual music.

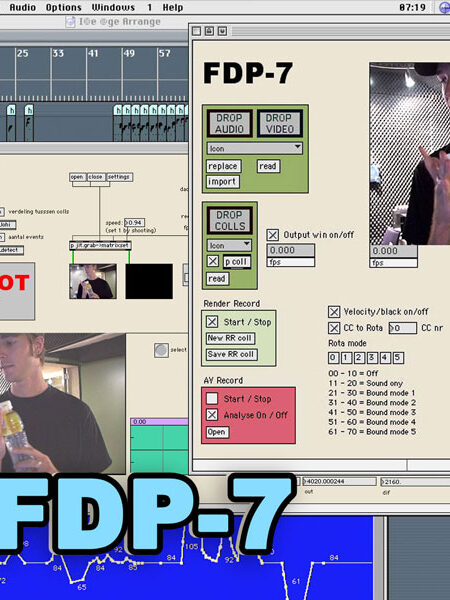

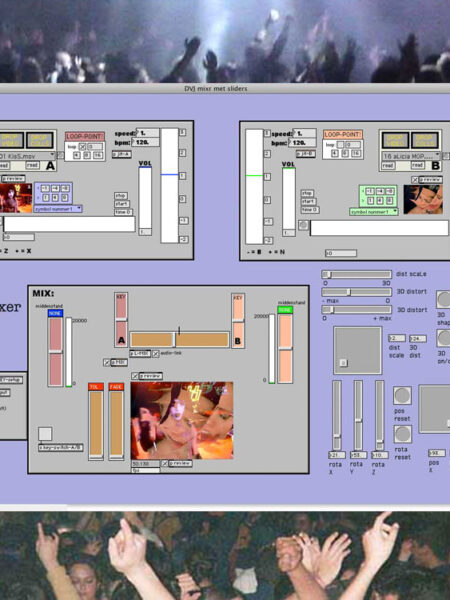

I developed many experimental AV instruments, but the skrtZz pen, the Frame Drummer Pro and the DVJ mixer were the most useful tools and opened up many new ways to produce and perform audio-visual tracks.

I registered www.dvj-tools.com to make these tools available to everybody. But it would take another 14 years to realise that goal, when EboSuite was launched.

Composition assistance

To optimise my artistic workflow I started developing composition assistance software in 2004. This software creates interesting visuals and video edits and mixes automatically, using templates, presets and algorithms. With a few controls, videos are selected, sliced, mixed and distorted automatically. This had a big influence on my audio-visual work from 2004 to 2006. Tracks from that era are pretty crazy and glitchy, because for the most part they were created live.

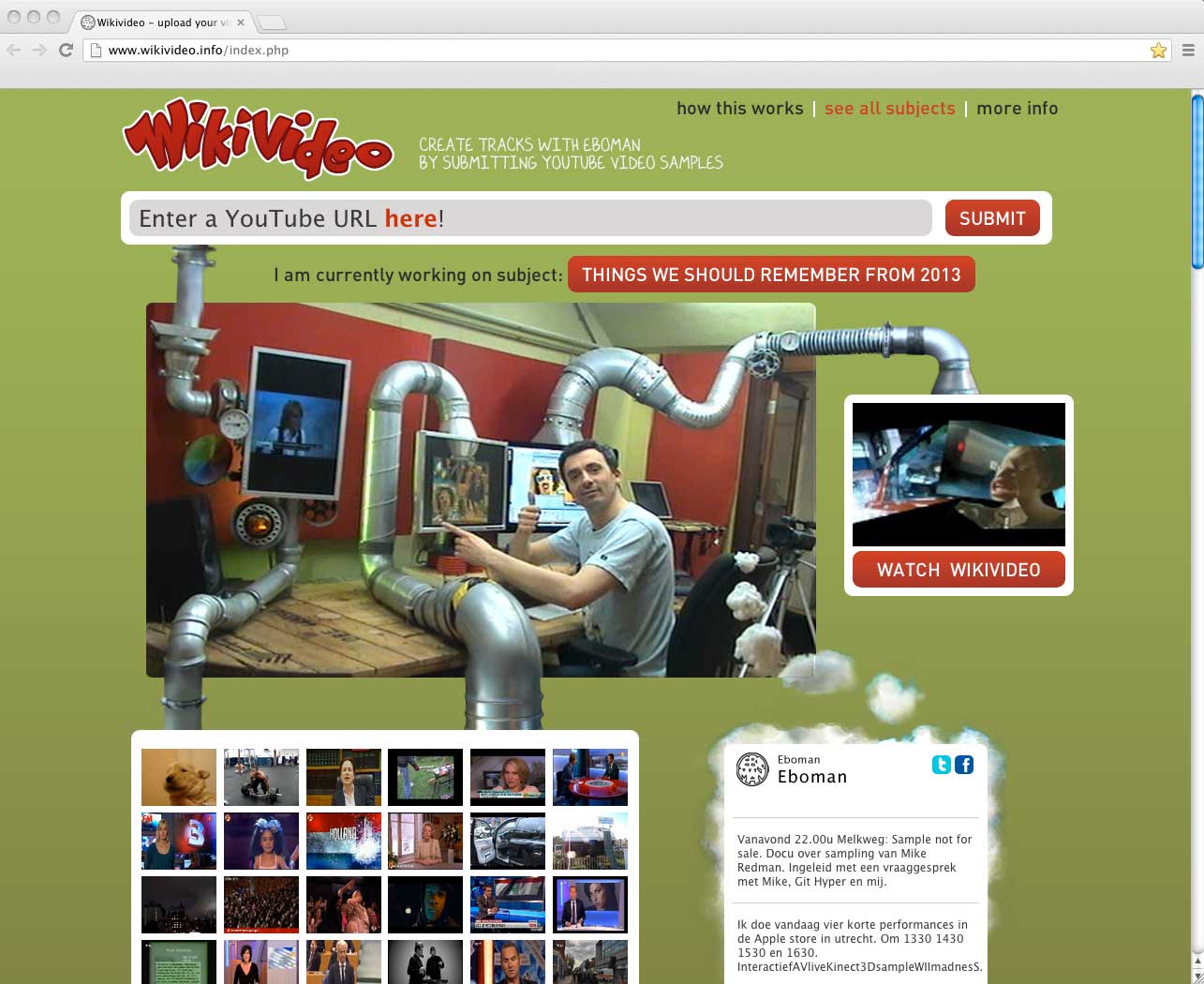

This technology makes it much easier to improvise and to respond to feedback from the audience during a live show. The faster workflow also helps me achieve greater artistic output, which is useful for social media, among other things. I call these quickly produced tracks ‘disposable video’ (DVJ 3.0, LLib LLik, Viva La Creacion, Doc vs Doc, Track in a day, Happy CarCrashing and WikiVideo are examples of disposable video projects).

EboStudio

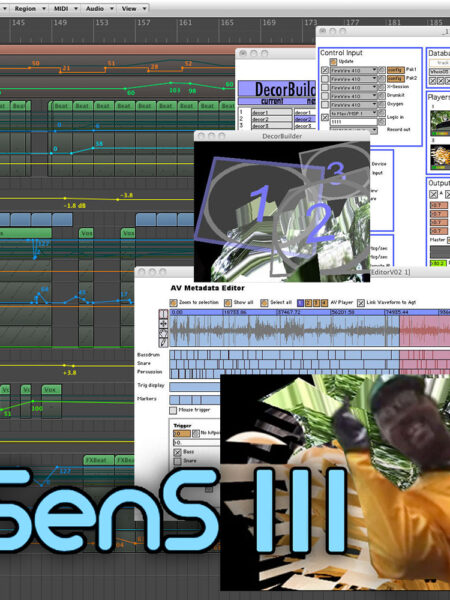

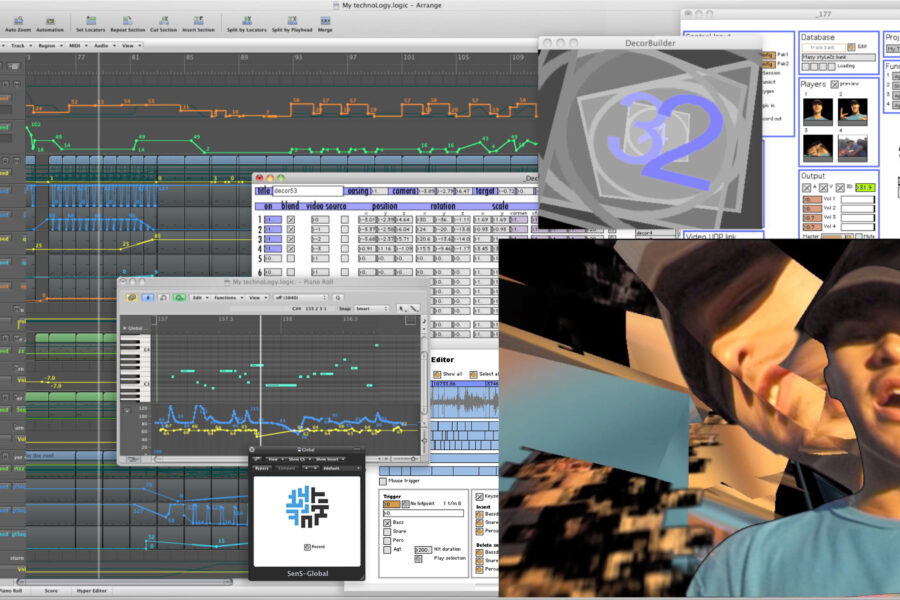

In 2004 I combined the most successful instruments into one overall system called SenS. This made it easier to use all the creative features to the fullest. In 2004 computer hardware was still pretty limited, but by 2009 this system had grown into a full-blown audio-visual production suite, merging the creative processes of making music and making visuals into one unified creative process. Ideal for audio-visual and visual music artists like me.

To do this, though, I became dependent on my technical team. To keep this team together and to create a solid basis for them to experiment, learn and develop into audio-visual technology specialists, I had to do more commercial work, for example for fashion shows, conferences and branding events. For this I set up EboStudio (initially called SmadSteck). This had a huge impact on my artistic work. My artistic choices had always been heavily influenced by technology (and copyright restrictions), but since 2006 they have also been influenced by my entrepreneurship.

Live sampling

A successful artistic and commercial concept was live video sampling. Between 2004 and 2012 I did many shows in which I recorded the audience live to create tracks on the spot. Most of these shows were commissioned by commercial companies and creative agencies.

Real time motion graphics

Another successful technology was the real time motion graphics and 3D video mix software of SenS. In 2008 computer hardware was not capable of mixing and distorting many videos at the same time, or of generating complex visuals in real time, while also running music software. The real time motion graphics software of SenS enabled me to create appealing 3D visual compositions, mix videos, visualise music and customise the show to the theme of the event without overtaxing the computer.

Interactive real time motion graphics

In 2010 I developed Senna. Senna is a ‘professional’ audio-visual instrument for children. It uses the same technology as SenS, but with a very user-friendly interface. Like an interactive children’s book, it teaches children step by step how to make an audio-visual composition and give an AV performance. Composition assistance technology helps children make a cool composition fast, in a playful way.

For this I made the real time motion graphics software of SenS interactive. This means that all visual elements in the composition are clickable to trigger an action, like loading a video, jumping to another part of the composition, removing graphics, changing effects etc. For Senna I used this technology to make the interface.

WikiVideo + HyperVideo

Audio-visual art is a powerful medium to communicate a message and to tell a story. It is amazing that there is now an unlimited supply of videos on the internet that can be used to tell a story. But the quantity of videos is so great, and their diversity so huge and ever-changing, that it is not realistic to expect any single individual to keep track of all of them.

In the WikiVideo project (2011), I produced compositions in cooperation with the general public. They helped me find interesting videos through the WikiVideo website. I customised my studio and SenS (then called SenSei) to be able to do this in real time. An earlier WikiVideo project is the Viva La Creaçion project (2007), for which I won an international Webby Award.

I developed a special video player for publishing these WikiVideos: the HyperVideo Player. A HyperVideo is a video in which you can click on all the different elements in the video collage. Clicking on these elements (in this case, the video samples and the graphics) takes the user to other places on the internet, for example Wikipedia articles, other videos, playlists and websites.

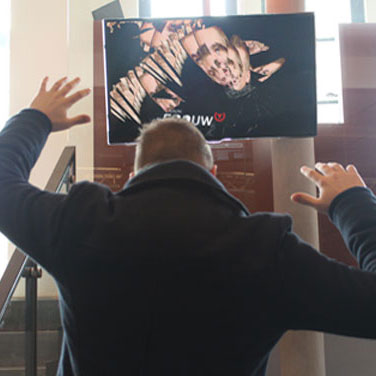

Augmented Stage

In 2011, I created Augmented Stage for my performances. With Augmented Stage I can literally step into the audio-visual composition on the screen (using a 3D camera), walk around in this artistic, virtual world and interact with all the videos and graphics live. I can walk around Michael Jackson on stage, interrupt a television show, be the drummer in a music video, jump between explosions, move objects, throw away a logo, and so on; the possibilities are limitless.

Augmented Stage also controls the software I use to run the show (SenSei and Ableton Live). When I touch the videos and graphics I can start or stop the sequence or jump across it, control audio-visual effects, trigger videos, record video, and so on.

The audio-visual composition is not only my art, but also the interface to control the show!

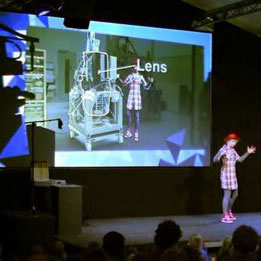

Meanwhile, the audience can easily follow all the action on the video screens. I am no longer just an audio-visual performer hidden behind my computer, but also an actor playing a part in my compositions live. It is also great for presentations, theatre and interactive installations.

Interactive Tracks

In 2012 I started the Interactive Tracks project. Interactive Tracks are interactive audio-visual compositions (iOS apps) with integrated creative functions to remix and personalize them. Tips and instructions, integrated in the track, help the user during this process (like Senna). The resulting personalized track can easily be shared on social media. This is a new way for artists to promote their work.

I started the Interactive Tracks project for two reasons: to create a better way to release audio-visual work, and to finance the rewriting of the SenS software into release-worthy software. An important goal for me since 2004 was to make my technology available to anybody. But building rock-solid, releasable software is a slow and costly process. Producing different Interactive Tracks enabled me to translate the SenSei concepts into releasable software step by step, app by app, and to grow towards the complex audio-visual instrument that is now EboSuite.

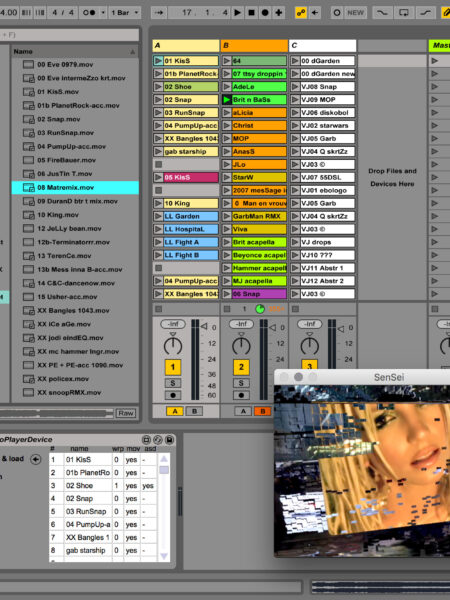

EboSuite

In 2017 EboSuite was launched! I had been looking forward to this moment for a long time. Finally, the instrument I had been working on with my team for many years was available for everybody to use. EboSuite is a collection of plug-ins that turns Ableton Live (popular music production software) into an audio-visual instrument. The aim of my software is to create the ultimate audio-visual instrument, one that merges the creative processes of making music and making visuals into one unified creative process. Ideal for audio-visual and visual music artists like me. You should try it out yourself!