In 1998, I developed the skrtZz concept to work with video in a more creative, flexible and musical way.

Concept

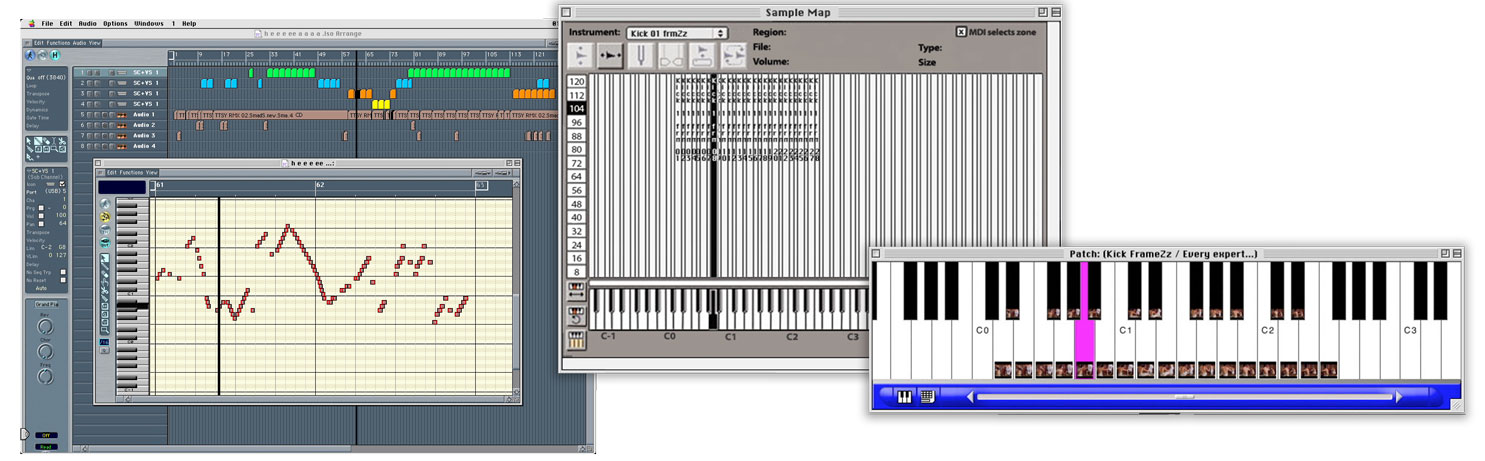

To skrtZz a sample, the sample is cut up into slices of 40 milliseconds (the duration of one video frame at 25 frames per second). These slices are placed chromatically on a MIDI keyboard. Playing the slices chromatically reproduces the original sample. Starting on a higher note on the MIDI keyboard changes the start point of the sample. Playing the MIDI notes in reverse order plays the sample in reverse. Placing the MIDI notes closer to each other in time speeds up playback, and placing them further apart (filling in the gaps with repeated notes) slows down playback without changing the pitch of the sample, similar to real-time granular synthesis.
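The note patterns described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the software I used: `BASE_NOTE`, `slice_notes`, `schedule` and `slow_down` are hypothetical names, and the choice of MIDI note 36 for the first slice is an assumption. A pattern is modelled as a list of `(onset in ms, MIDI note)` pairs.

```python
SLICE_MS = 40    # one video frame at 25 fps
BASE_NOTE = 36   # assumption: the MIDI note that triggers the first slice


def slice_notes(sample_ms):
    """One chromatic MIDI note per complete 40 ms slice of the sample."""
    return [BASE_NOTE + i for i in range(sample_ms // SLICE_MS)]


def schedule(notes, spacing_ms=SLICE_MS):
    """Place notes on a timeline as (onset_ms, note) pairs."""
    return [(i * spacing_ms, note) for i, note in enumerate(notes)]


def slow_down(notes, factor):
    """Repeat every note `factor` times so the gaps are filled."""
    return [note for note in notes for _ in range(factor)]


notes = slice_notes(200)                          # a 200 ms sample gives 5 slices

original = schedule(notes)                        # plays the sample as recorded
later_start = schedule(notes[2:])                 # start on a higher note
backwards = schedule(notes[::-1])                 # reverse order, reverse playback
double_speed = schedule(notes, SLICE_MS // 2)     # notes closer together in time
half_speed = schedule(slow_down(notes, 2))        # repeated notes fill the gaps
```

In every pattern the slices themselves are untouched, which is why the speed changes without the pitch changing.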

Combining these techniques makes it easy to create interesting rhythmic, glitchy patterns and to play an improvised solo, similar to hip hop-style scratching. Changing the pitch of the slices makes the scratch sound more realistic and opens up even more creative possibilities (Digital skrtZz worLd Champion focusses on this idea).

Real-time video skrtZz-ing

This concept works great for both video and audio samples. To do this with video samples, I synced the Arkaos X-Pose visual sampler software to my Sample Cell audio samplers. X-Pose didn’t support audio playback, but linking it to Sample Cell through MIDI solved that issue (much as I did for my first homemade video sampler and EboSuite’s eSimpler). A great feature of X-Pose was that you could render a MIDI pattern to a new, self-contained movie. I could go fully nuts with wild MIDI patterns and then load the MIDI file into X-Pose to render it to a new movie file, without having to do any extra video editing. Super nice! EboSuite’s eComper uses a similar concept to create a video from a MIDI file.

‘Real-time’ audio-visual effects

To take this concept a step further, I used audio-visual samples with pre-rendered audio-visual effects. In this way I could control audio-visual effects in real time. Even the timing of complex effects was easy to control live this way, without latency! I would distort the audio track of a video sample with effects (often using Hyperprism), visualize those effects by distorting the video track with visual effects software (usually Adobe After Effects), and render the two tracks together into a new movie. Usually I made sure the pre-rendered AVFX in the sample had a simple, logical development over time, starting with no effect and then adding more effects as time progressed. This way, I could easily move from a ‘clean’ visual/sound to the distorted visual/sound, resulting in smooth, natural sounding/looking skrtZz compositions.

Compositions

I used this concept in many projects, like d Driver must be a MAdMaN. I focussed on audio-only skrtZz-ing in the Digital skrtZz worLd champion project to dive deeper into hip hop turntablist-style skrtZz-ing. The skrtZz concept is one of the concepts that led to the development of the SenSorSuit and the skrtZz pen.

The skrtZz technique is also used on the final track of the Trap to skiLL you E.P.